NVIDIA Isaac ROS: The Essence of Visual Perception Technology Transcending the Limits of Autonomous Navigation

1. Introduction: Deterministic State Estimation in Unstructured Environments

Autonomous navigation succeeds or fails on how precisely a robot can estimate its state in environments that are neither idealized nor static. Real-world environments differ significantly from controlled laboratory settings: stochastic sensor noise, accumulating drift, and computational latency, which undermines the determinism of real-time control loops, all pose formidable barriers to traditional geometric SLAM.

NVIDIA Isaac ROS addresses these limitations through the tight integration of software algorithms and hardware acceleration. Focusing on the Isaac ROS 3.2 release, this article analyzes how accelerated computing architectures are redefining perception technology in modern robotics.

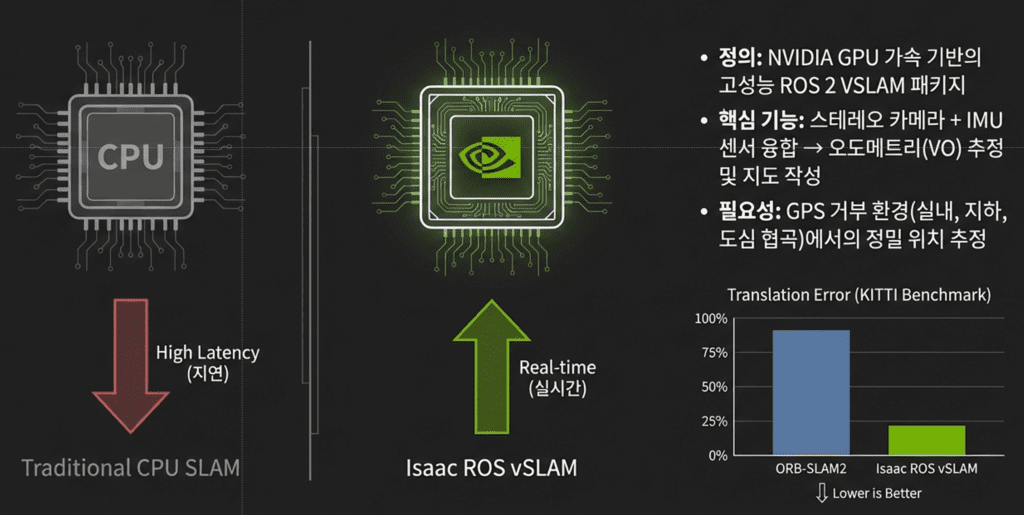

2. [Takeaway 1] The 250 FPS Innovation: Stability of Control Loops Realized by cuVSLAM

For high-speed mobile robots or drones, the frequency of pose estimation is not merely a numerical value; it is a metric that determines the ‘tightness’ of the control loop. The benchmark figures reported for Isaac ROS’s cuVSLAM on the Jetson AGX Xavier platform show strong real-time performance.

Performance Metrics Analysis (KITTI Visual Odometry 2012 Benchmark)

- Runtime: 0.007 s per frame (about 143 FPS at this runtime; 250 FPS is the quoted maximum)

- Translation Error: 0.94%

- Rotation Error: 0.0019 deg/m

- Comparison (ORB-SLAM2): Runtime 0.06s / Translation Error 1.15% / Rotation Error 0.0027 deg/m (x86_64 2 cores @ >3.5GHz)
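
To make these figures easier to compare, the short sketch below converts the per-frame runtimes into frame rates and the error rates into expected drift over a path. The numbers are copied from the list above; the 100 m path length and the function names are illustrative assumptions. Note that a 0.007 s runtime corresponds to roughly 143 FPS, so the 250 FPS figure is best read as a peak value rather than something derived from this runtime.

```python
from typing import Dict, Tuple

# Figures copied from the benchmark list above.
CUVSLAM = {"runtime_s": 0.007, "trans_err_pct": 0.94, "rot_err_deg_per_m": 0.0019}
ORB_SLAM2 = {"runtime_s": 0.06, "trans_err_pct": 1.15, "rot_err_deg_per_m": 0.0027}


def frames_per_second(runtime_s: float) -> float:
    """Throughput implied by a per-frame runtime."""
    return 1.0 / runtime_s


def expected_drift(metrics: Dict[str, float], path_length_m: float) -> Tuple[float, float]:
    """Return (translation drift in m, rotation drift in deg) over a path."""
    translation_m = metrics["trans_err_pct"] / 100.0 * path_length_m
    rotation_deg = metrics["rot_err_deg_per_m"] * path_length_m
    return translation_m, rotation_deg


if __name__ == "__main__":
    # The 100 m path length is an arbitrary illustrative choice.
    for name, m in (("cuVSLAM", CUVSLAM), ("ORB-SLAM2", ORB_SLAM2)):
        fps = frames_per_second(m["runtime_s"])
        drift_m, drift_deg = expected_drift(m, path_length_m=100.0)
        print(f"{name}: {fps:.0f} FPS, {drift_m:.2f} m / {drift_deg:.2f} deg drift per 100 m")
```

On these numbers, the runtime gap (about 8.6x) is far larger than the accuracy gap, which is why the frame rate matters most for control-loop tightness.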

The key point is that this performance is not simply the result of a faster GPU. Accelerated computing allows a significantly higher number of keypoints to be matched in real time, which helps reduce the overall reprojection error. In addition, the zero-copy data transfer enabled by the NITROS (NVIDIA Isaac Transport for ROS) architecture reduces latency along the entire path from sensor data acquisition to the execution of control commands.

3. [Takeaway 2] The RealSense Paradox: Resolving Sensor Interference through 60Hz Interleaving

The Intel RealSense D400 series utilizes an active stereo projector to generate high-quality depth information. Paradoxically, however, this projection pattern acts as noise that disrupts feature point extraction for VSLAM.

“The RealSense D400 series uses an inbuilt optical projector to achieve active stereo vision for improved depth quality. However, the emitted pattern visible on the stereo IR images disrupts VSLAM performance.”

Isaac ROS resolves this issue through the ‘RealSense Splitter’ node. The core mechanism is an interleaving method that toggles the emitter state at a 60Hz frequency. The Splitter node reads each frame's emitter metadata to categorize and distribute frames: frames captured with the emitter off are routed to VSLAM, while high-quality depth frames captured with the emitter on are directed to nvblox for mapping. This approach reconciles the conflicting goals of pose estimation precision and mapping accuracy.
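
The routing logic described above can be sketched in a few lines of plain Python. This is not the Isaac ROS node itself: the `Frame` and `Splitter` classes, the `emitter_on` field, and the strict on/off alternation are all hypothetical stand-ins used only to illustrate the idea.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Frame:
    """Hypothetical stand-in for an IR/depth frame plus its metadata."""
    seq: int
    emitter_on: bool  # assumed to be read from per-frame metadata


@dataclass
class Splitter:
    """Routes frames by emitter state, mirroring the behaviour described above."""
    vslam_queue: List[Frame] = field(default_factory=list)   # emitter off
    nvblox_queue: List[Frame] = field(default_factory=list)  # emitter on

    def route(self, frame: Frame) -> str:
        """Append the frame to the matching queue and return the consumer name."""
        if frame.emitter_on:
            self.nvblox_queue.append(frame)
            return "nvblox"
        self.vslam_queue.append(frame)
        return "vslam"


def interleaved_stream(n_frames: int) -> List[Frame]:
    """Assumed pattern: the emitter toggles every frame (even on, odd off)."""
    return [Frame(seq=i, emitter_on=(i % 2 == 0)) for i in range(n_frames)]


if __name__ == "__main__":
    splitter = Splitter()
    for frame in interleaved_stream(60):  # one second of a 60 Hz stream
        splitter.route(frame)
    print(len(splitter.vslam_queue), len(splitter.nvblox_queue))
```

Under the assumption that the emitter alternates on every frame of a 60 Hz stream, each consumer receives about half of the frames, so neither VSLAM nor nvblox sees a frame captured in the wrong emitter state.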

——————————————————————————–

4. [Takeaway 3] cuVGL: Hierarchical Indexing Resolving the ‘Kidnapped Robot Problem’

Global Localization—finding a robot’s position when it completely loses its location (the Kidnapped Robot Problem) or re-enters a previously visited large-scale environment—remains an extremely challenging task. cuVGL (CUDA-accelerated Visual Global Localization) from the Isaac Mapping package addresses this through SIFT feature extraction and a BoW (Bag of Words) mechanism.

From an academic perspective, the dual approach to Vocabulary generation is particularly noteworthy:

- KMeans: Utilized when building a completely new visual vocabulary from scratch.

- BIRCH (Balanced Iterative Reducing and Clustering using Hierarchies): Efficient for the incremental build or refinement of existing vocabularies within large-scale environments.
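
To illustrate the vocabulary idea, here is a minimal sketch of the k-means path only: descriptors are clustered into visual words, each image becomes a normalized word histogram, and images are compared by cosine similarity. It is a toy under stated assumptions, not cuVGL's implementation: the descriptors are 2-D instead of SIFT's 128-D, the seeding is simplistic, and all function names are hypothetical. BIRCH is not shown.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def nearest(centroids: List[Vector], v: Vector) -> int:
    """Index of the centroid (visual word) closest to descriptor v."""
    return min(range(len(centroids)), key=lambda i: math.dist(centroids[i], v))


def kmeans(descriptors: List[Vector], k: int, iters: int = 10) -> List[Vector]:
    """Plain Lloyd's k-means; the first k descriptors seed the centroids."""
    centroids: List[Vector] = [list(d) for d in descriptors[:k]]
    for _ in range(iters):
        buckets: List[List[Vector]] = [[] for _ in range(k)]
        for d in descriptors:
            buckets[nearest(centroids, d)].append(d)
        for i, bucket in enumerate(buckets):
            if bucket:  # keep the old centroid if nothing was assigned to it
                centroids[i] = [sum(col) / len(bucket) for col in zip(*bucket)]
    return centroids


def bow_histogram(centroids: List[Vector], descriptors: List[Vector]) -> List[float]:
    """L2-normalized word-count histogram for one image."""
    counts = [0.0] * len(centroids)
    for d in descriptors:
        counts[nearest(centroids, d)] += 1.0
    norm = math.sqrt(sum(c * c for c in counts)) or 1.0
    return [c / norm for c in counts]


def similarity(h1: List[float], h2: List[float]) -> float:
    """Cosine similarity between two normalized histograms."""
    return sum(a * b for a, b in zip(h1, h2))


if __name__ == "__main__":
    training = [(0, 0), (10, 10), (0, 1), (10, 11), (1, 0), (11, 10)]
    vocab = kmeans(training, k=2)
    place_a = bow_histogram(vocab, [(0, 0), (0, 1), (1, 0)])
    place_b = bow_histogram(vocab, [(10, 10), (11, 10)])
    query = bow_histogram(vocab, [(0.5, 0.5), (0, 0)])
    print(similarity(query, place_a), similarity(query, place_b))
```

The query image shares its visual words with `place_a` and none with `place_b`, so a lookup over histograms would return `place_a` as the localization candidate.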

Furthermore, the VisualGlobalLocalizationNode manages the coordinate transformation between the vmap (map_frame) and base_link (base_frame). Users can tune the trade-off between Precision and Recall for specific research objectives using the vgl_localization_precision_level parameter (ranging from 0 to 2).
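
The precision/recall trade-off itself can be illustrated with a small sketch. How `vgl_localization_precision_level` maps to internal thresholds is not described here, so the code below does not model that parameter; it only shows, on made-up candidate scores, how a stricter acceptance threshold raises precision at the cost of recall.

```python
from typing import List, Tuple

# (similarity score, whether the candidate is truly the right place); made-up data
CANDIDATES: List[Tuple[float, bool]] = [
    (0.95, True), (0.90, True), (0.80, False), (0.75, True),
    (0.60, False), (0.55, True), (0.40, False), (0.30, False),
]


def precision_recall(candidates: List[Tuple[float, bool]],
                     threshold: float) -> Tuple[float, float]:
    """Precision and recall when accepting every candidate scoring >= threshold."""
    accepted = [ok for score, ok in candidates if score >= threshold]
    true_pos = sum(accepted)
    total_pos = sum(ok for _, ok in candidates)
    precision = true_pos / len(accepted) if accepted else 1.0
    recall = true_pos / total_pos if total_pos else 0.0
    return precision, recall


if __name__ == "__main__":
    # Hypothetical thresholds, from permissive to strict.
    for threshold in (0.5, 0.7, 0.85):
        p, r = precision_recall(CANDIDATES, threshold)
        print(f"threshold={threshold:.2f}  precision={p:.2f}  recall={r:.2f}")
```

A strict setting suits cases where a wrong relocalization is costly, while a permissive one suits cases where failing to relocalize at all is the bigger risk.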

——————————————————————————–

5. [Takeaway 4] The Philosophy of Multi-sensor Fusion: ‘Diversity’ as a Response to Physical Defects

A single-sensor system inevitably encounters physical limitations. NVIDIA’s design philosophy is rooted in ‘Diversity,’ which involves combining sensors based on different physical principles.

- Responding to Physical Failure Modes: Odometry that relies on a single source fails in scenarios such as dark surfaces absorbing the LiDAR's laser light or wheels slipping on low-friction ground.

- VIO (Visual-Inertial Odometry): In featureless environments like monochromatic walls, VIO integrates IMU data to prevent discontinuities in pose estimation.

- Fault Detection Architecture: By simultaneously operating three or more independent estimators—such as VSLAM, LiDAR, and wheel odometry—the system gains the robustness to statistically detect and isolate errors from a specific sensor.
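
One simple way to picture the fault detection idea is a median-consensus check across three estimators. The sketch below is an illustrative assumption, not the method Isaac ROS uses: the function names, the scalar displacement values, and the tolerance are all hypothetical.

```python
from statistics import median
from typing import Dict, List


def detect_faulty(estimates: Dict[str, float], tolerance: float) -> List[str]:
    """Names of estimators deviating from the median by more than tolerance."""
    consensus = median(estimates.values())
    return sorted(n for n, v in estimates.items() if abs(v - consensus) > tolerance)


def fused_estimate(estimates: Dict[str, float], tolerance: float) -> float:
    """Mean of the estimators that agree with the median consensus."""
    faulty = set(detect_faulty(estimates, tolerance))
    healthy = [v for n, v in estimates.items() if n not in faulty]
    if not healthy:  # no estimator agrees; fall back to the median itself
        return median(estimates.values())
    return sum(healthy) / len(healthy)


if __name__ == "__main__":
    # Forward displacement in metres over one interval; the wheels have slipped.
    estimates = {"vslam": 1.02, "lidar": 1.00, "wheel": 1.45}
    print(detect_faulty(estimates, tolerance=0.1))
    print(fused_estimate(estimates, tolerance=0.1))
```

With only two estimators a disagreement cannot be attributed to either one, which is why three or more independent sources are needed to isolate the faulty sensor.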

——————————————————————————–

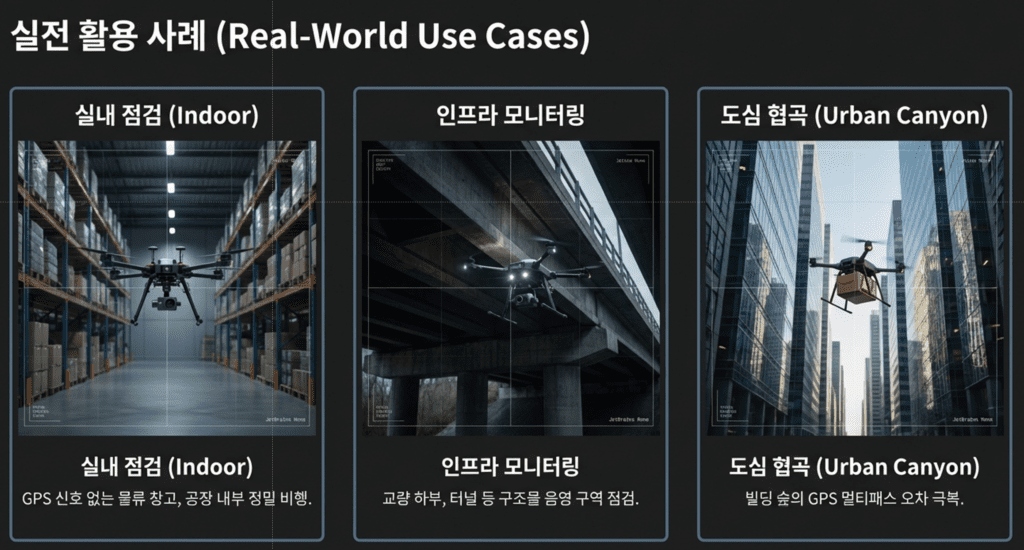

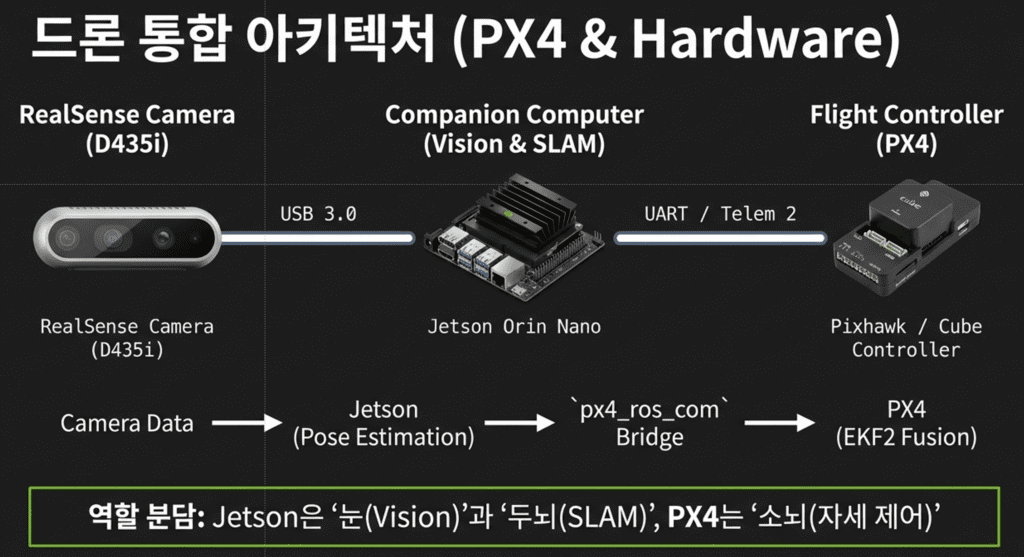

6. [Takeaway 5] Integrated Architecture for Autonomous Flight and Mobile Platforms

The technical essence of Isaac ROS extends beyond ground robots like Nova Carter into the autonomous flight architecture of QUAD Drone Lab. With the 3.2 release in late 2024, support for multi-camera setups has been substantially improved, so the omnidirectional view around a robot can now be processed with GPU acceleration within a unified coordinate system. On drone platforms in particular, the Micro-XRCE-DDS Agent bridges the ROS 2 and PX4 communication layers. This allows GPU-accelerated VSLAM data to be supplied to the Flight Controller (FC) in real time, even during high-speed maneuvers, facilitating more precise autonomous flight.
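
One practical detail when passing pose data across this bridge is the frame convention: ROS conventionally uses ENU world and FLU body axes, while PX4 uses NED and FRD. The sketch below shows only that axis remapping for positions and body-frame vectors. The function names are hypothetical, orientation conversion is omitted, and whether a given pipeline needs this step depends on how its bridge is configured.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def enu_to_ned(v: Vec3) -> Vec3:
    """World frame: (east, north, up) -> (north, east, down)."""
    east, north, up = v
    return (north, east, -up)


def flu_to_frd(v: Vec3) -> Vec3:
    """Body frame: (forward, left, up) -> (forward, right, down)."""
    forward, left, up = v
    return (forward, -left, -up)


if __name__ == "__main__":
    # A drone 2 m east, 5 m north, and 10 m above the origin in ENU.
    print(enu_to_ned((2.0, 5.0, 10.0)))
    # Body velocity: 1 m/s forward, 0.5 m/s to the left, climbing at 0.2 m/s.
    print(flu_to_frd((1.0, 0.5, 0.2)))
```

Both mappings are their own inverses, so the same functions convert data travelling in the opposite direction.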

——————————————————————————–

7. Conclusion: Beyond the Physical Limitations of Sensor Data

NVIDIA Isaac ROS demonstrates that evolving a robot’s ‘eyes’ is not simply about using superior sensors. The true essence of robotics engineering lies in an architecture that overcomes RealSense pattern interference through 60Hz interleaving, accelerates massive BoW indexing via the GPU, and compensates for physical sensor defects through statistical fusion.

We must now ask: To what extent can the physical limits of sensor data and the constraints of computational resources be surpassed by hardware-accelerated algorithms? Isaac ROS is already providing a powerful answer to autonomous navigation’s most difficult challenge: real-time deterministic state estimation.

Author: maponarooo, CEO of QUAD Drone Lab

Date: February 7, 2026
